Google Search Console Team Issues Warnings For JavaScript & CSS Blocking – What It Is and How To Fix It

Google Now Warns About Blocked JavaScript and CSS That Can Hurt Top SEO Page Rankings

On the morning of June 28th, 2015, webmasters all over the world found their inboxes inundated with fresh warnings from Google Webmasters. As a top SEO agency, we like getting notices from Google because they offer insight into Google’s proprietary algorithm for achieving higher page rankings.

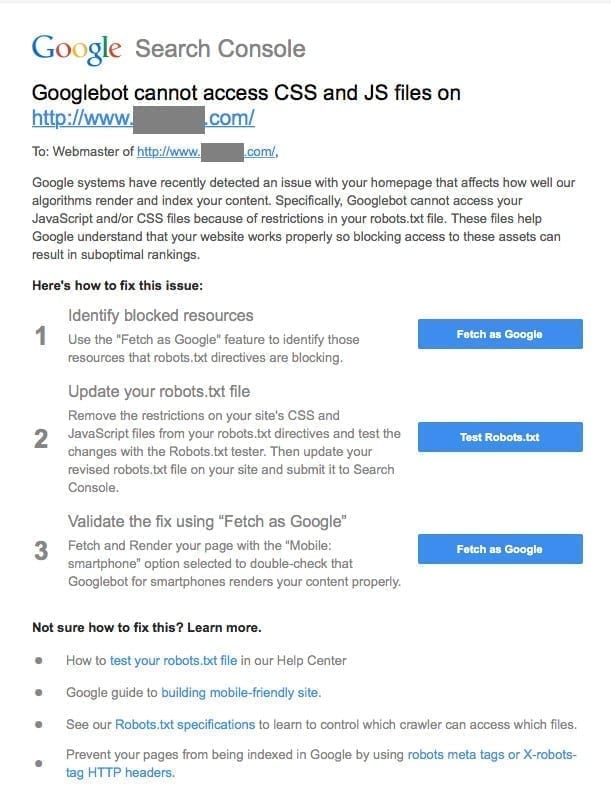

Titled “Googlebot cannot access CSS and JS files”, the messages to SEO agencies led with this rather cryptic statement:

Google systems have recently detected an issue with your homepage that affects how well our algorithms render and index your content. Specifically, Googlebot cannot access your JavaScript and/or CSS files because of restrictions in your robots.txt file. These files help Google understand that your website works properly so blocking access to these assets can result in suboptimal rankings.

Our SEO team first thought something might be wrong with our servers, but when we started looking around, we realized we weren’t alone. Like other SEO agencies and webmasters, we scrambled to understand what the warning meant and why we were suddenly getting these messages, so we could keep our clients in the best possible position. We got clarity pretty quickly and are passing along what we shared with our SEO clients.

So what happened, exactly?

As part of the mobile-friendly SEO algorithm update in April, Google also made changes to their webmaster guidelines, asserting that Googlebot must be able to crawl all CSS and JavaScript files. Some rules in your site’s robots.txt file, whether enabled by default in WordPress or added manually, may be inadvertently blocking some of these resources. Until now, blocking them was a standard, acceptable practice.
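To make the problem concrete, here is a minimal sketch of how a legacy robots.txt rule can block a script file. The robots.txt contents, the example.com URLs, and the `is_blocked` helper are all assumptions made up for illustration, not rules taken from any particular site. The check uses Python's standard `urllib.robotparser`, which does simple prefix matching and does not implement Googlebot's wildcard extensions, so treat it as an approximation of what Google actually does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical legacy robots.txt of the kind once common on WordPress sites.
LEGACY_ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/plugins/
"""


def is_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if robots_txt disallows user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Hypothetical asset URLs; substitute the ones your own pages load.
    for url in (
        "https://example.com/wp-includes/js/jquery/jquery.js",
        "https://example.com/wp-content/themes/example/style.css",
    ):
        status = "blocked" if is_blocked(LEGACY_ROBOTS_TXT, url) else "allowed"
        print(f"{status}: {url}")
```

In this sketch the script under /wp-includes/ is reported as blocked while the theme stylesheet is allowed, which is the kind of partial blocking that can trigger the warning.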

How do we fix it on our site?

The first thing to do is to jump into Google Webmasters and check the Google Index > Blocked Resources subsection. Alternatively, you can go to the Crawl > Fetch as Google page in Google Webmasters.

Legacy settings in a CMS such as WordPress, or in WordPress plugins, may be blocking these resources by default. Compare the blocked resources against your robots.txt file and remove or loosen any rule that blocks CSS or JavaScript. Then re-run the test to confirm the problems have been cleared, and you’re good to go.
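If you would like a rough local check before re-running Google's test, the sketch below collects the scripts and stylesheets a page references and lists the ones a given robots.txt would block. The sample HTML, both robots.txt variants, the example.com base URL, and the `AssetCollector` and `blocked_assets` names are hypothetical, chosen only for illustration. As before, Python's `urllib.robotparser` only approximates Googlebot's matching rules, so Google's own tools remain the final word.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


class AssetCollector(HTMLParser):
    """Collect URLs of external scripts and stylesheets referenced by a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.assets = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "script" and attributes.get("src"):
            self.assets.append(urljoin(self.base_url, attributes["src"]))
        elif tag == "link" and attributes.get("href"):
            rel = (attributes.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                self.assets.append(urljoin(self.base_url, attributes["href"]))


def blocked_assets(html, robots_txt, base_url, user_agent="Googlebot"):
    """Return the page's CSS/JS URLs that robots_txt disallows for user_agent."""
    collector = AssetCollector(base_url)
    collector.feed(html)
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in collector.assets if not parser.can_fetch(user_agent, url)]


# Hypothetical page markup and robots.txt variants, for illustration only.
SAMPLE_HTML = """\
<html><head>
<link rel="stylesheet" href="/wp-content/themes/example/style.css">
<script src="/wp-includes/js/jquery/jquery.js"></script>
</head><body></body></html>
"""
BEFORE_CLEANUP = "User-agent: *\nDisallow: /wp-admin/\nDisallow: /wp-includes/\n"
AFTER_CLEANUP = "User-agent: *\nDisallow: /wp-admin/\n"

if __name__ == "__main__":
    base = "https://example.com/"
    print("before:", blocked_assets(SAMPLE_HTML, BEFORE_CLEANUP, base))
    print("after: ", blocked_assets(SAMPLE_HTML, AFTER_CLEANUP, base))
```

An empty list after the cleanup suggests the rules no longer block the page's assets. In practice you would feed in your real homepage HTML and your live robots.txt instead of the sample strings.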

If you or your webmaster received this message, we and other top SEO agencies encourage you to act on it and make sure the team in charge of your website resolves the issue as soon as possible.

As always, if you don’t feel comfortable tinkering with files on your server, let SEO professional teams like ours at NicheLabs take care of this for you.